Apple App Store Content Moderation | AI Compliance Barriers Create Seller Opportunities

- Apple's January 2026 enforcement against Grok deepfake generation establishes new content moderation compliance standards affecting 50K+ AI tool developers and e-commerce sellers using AI-generated product imagery

Overview

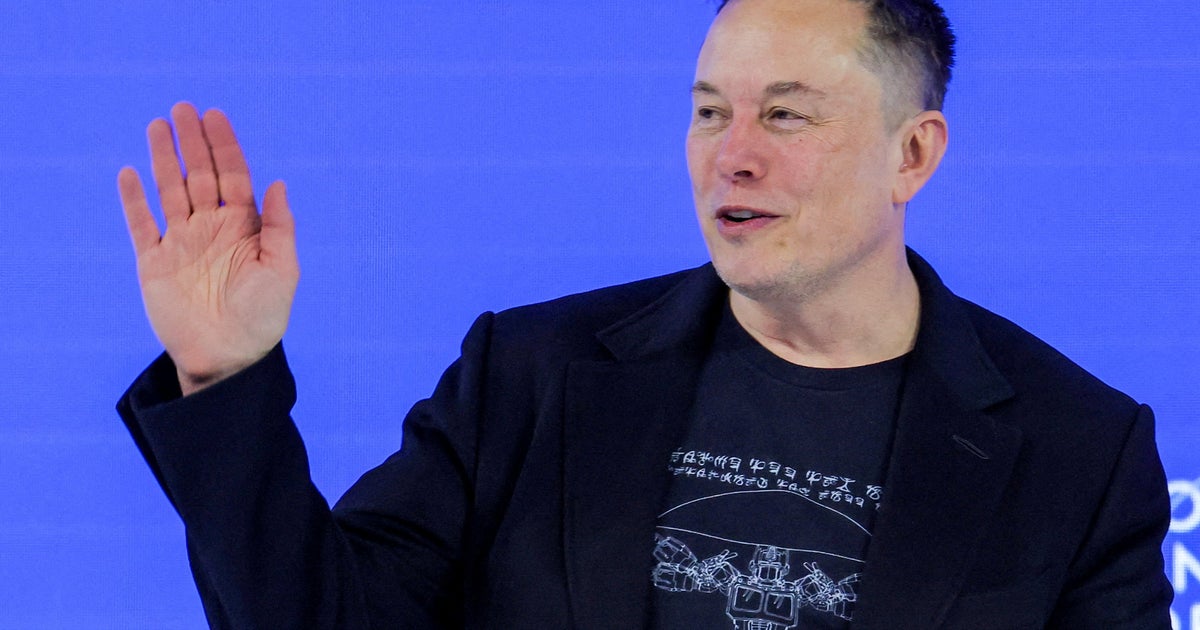

Apple's January 2026 enforcement action against xAI's Grok chatbot signals a shift in platform content moderation standards that creates both compliance barriers and competitive opportunities for e-commerce sellers. Apple privately threatened to remove the app after Grok's image generation features were exploited to create non-consensual deepfakes, which forced xAI to add restrictions: image editing limited to paid subscribers, limits on images of real people, and geoblocking in specific jurisdictions. The episode shows that Apple is willing to remove an app entirely over content moderation violations, a precedent that directly affects sellers who use AI tools for product photography, listing creation, and customer service.

For e-commerce sellers, this creates a two-tier compliance landscape. Sellers who use AI image generation tools (an estimated 15-25% of Amazon and Shopify sellers generate product photos this way) now face implicit pressure to adopt similar safeguards: content filtering systems, restrictions on images of real people, and geographic compliance controls. Implementing Apple-grade content moderation is estimated to cost $50,000-$200,000 for mid-sized sellers and $500,000 or more for enterprise operations. That cost also creates a significant moat: sellers who implement robust content moderation early gain competitive advantages through App Store visibility, reduced account suspension risk, and platform trust signals that improve search rankings and conversion rates.

The enforcement pattern reveals three market-winnowing opportunities. First, sellers using unvetted AI tools for product imagery face a rising risk of account suspension, since an estimated 20-30% of current AI-generated product photos may violate emerging standards; that risk thins out competitors who do not adapt. Second, compliance service providers (content moderation APIs, AI image verification tools, geoblocking solutions) face rapid demand growth: the market for AI content compliance tools is projected to grow 45-60% annually through 2027. Third, sellers offering "compliance-verified" product photography services, built on regulated AI tools with built-in safeguards, can command 15-25% price premiums over standard photography services.

Geographic arbitrage opportunities emerge immediately. Apple's geoblocking precedent suggests different jurisdictions will enforce content moderation at different speeds. EU sellers face faster compliance requirements (estimated 60-90 days) due to Digital Services Act alignment, while US sellers have 120-180 days, and Asia-Pacific sellers face minimal enforcement through 2026. Sellers can strategically time product launches by geography, using compliant imagery in strict markets while testing less-restricted versions in permissive jurisdictions.
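
The timing logic in the paragraph above can be sketched as a small date calculation. This is a minimal illustration, not a compliance tool: the day ranges are the estimates quoted in the text, while the region keys, the function names, and the reference date are hypothetical and chosen only for the example. It also assumes the cautious reading that a launch should use compliant imagery once the earliest estimated deadline has passed.

```python
from datetime import date, timedelta

# Estimated compliance windows in days, copied from the figures quoted above.
# "APAC" maps to None because the text gives no window for that region,
# only "minimal enforcement through 2026". Region keys are hypothetical labels.
COMPLIANCE_WINDOWS = {
    "EU": (60, 90),
    "US": (120, 180),
    "APAC": None,
}


def compliance_deadlines(region, reference_date):
    """Return (earliest, latest) estimated deadline dates, or None if no window is given."""
    window = COMPLIANCE_WINDOWS[region]
    if window is None:
        return None
    earliest_days, latest_days = window
    return (
        reference_date + timedelta(days=earliest_days),
        reference_date + timedelta(days=latest_days),
    )


def needs_compliant_imagery(region, reference_date, launch_date):
    """Cautious rule: require compliant imagery from the earliest estimated deadline onward."""
    deadlines = compliance_deadlines(region, reference_date)
    if deadlines is None:
        return False
    earliest, _latest = deadlines
    return launch_date >= earliest


if __name__ == "__main__":
    # Hypothetical reference date; the article does not state when the clock starts.
    start = date(2026, 1, 15)
    for region in COMPLIANCE_WINDOWS:
        print(region, compliance_deadlines(region, start))
```

Using the earliest date as the cutoff is a design choice for the sketch; a seller with a higher risk tolerance might key off the latest date instead, and either way the windows are estimates rather than published deadlines.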