Google TPU 8 Chips Enable 2x Customer Volume | AI Sellers Get Competitive Edge

- 80% better performance-per-dollar with TPU 8i inference
- 3x compute gains with TPU 8t training
- Available late 2025 for AI-powered product recommendations, dynamic pricing, and customer service automation

Overview

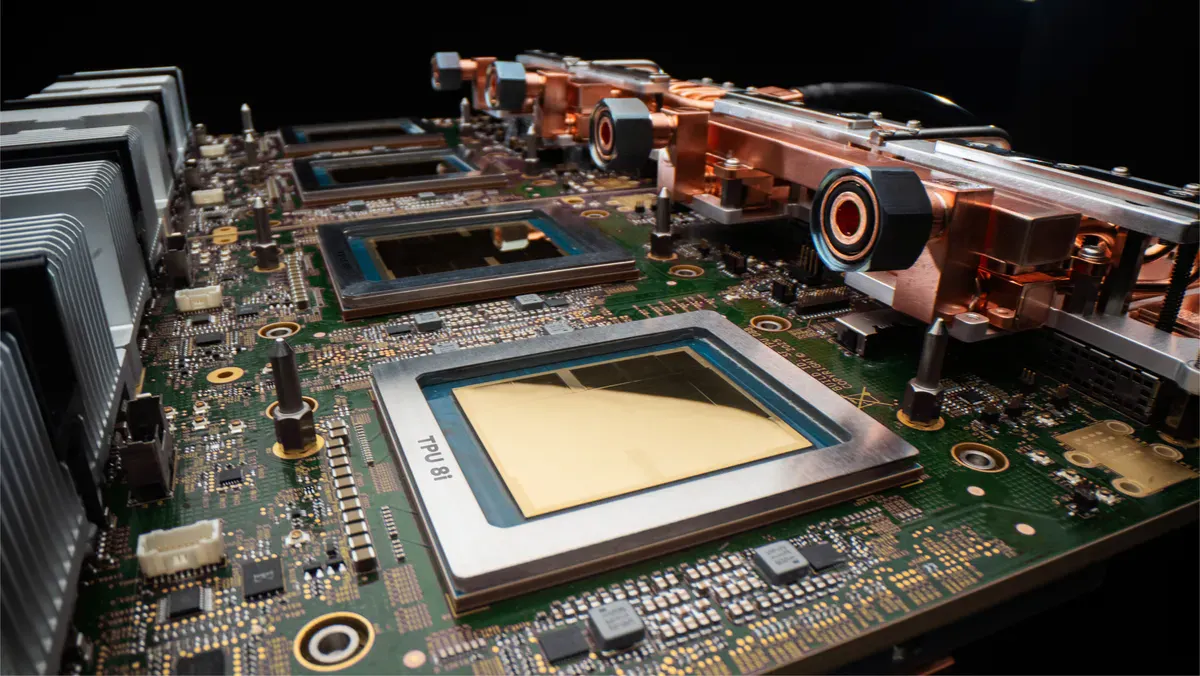

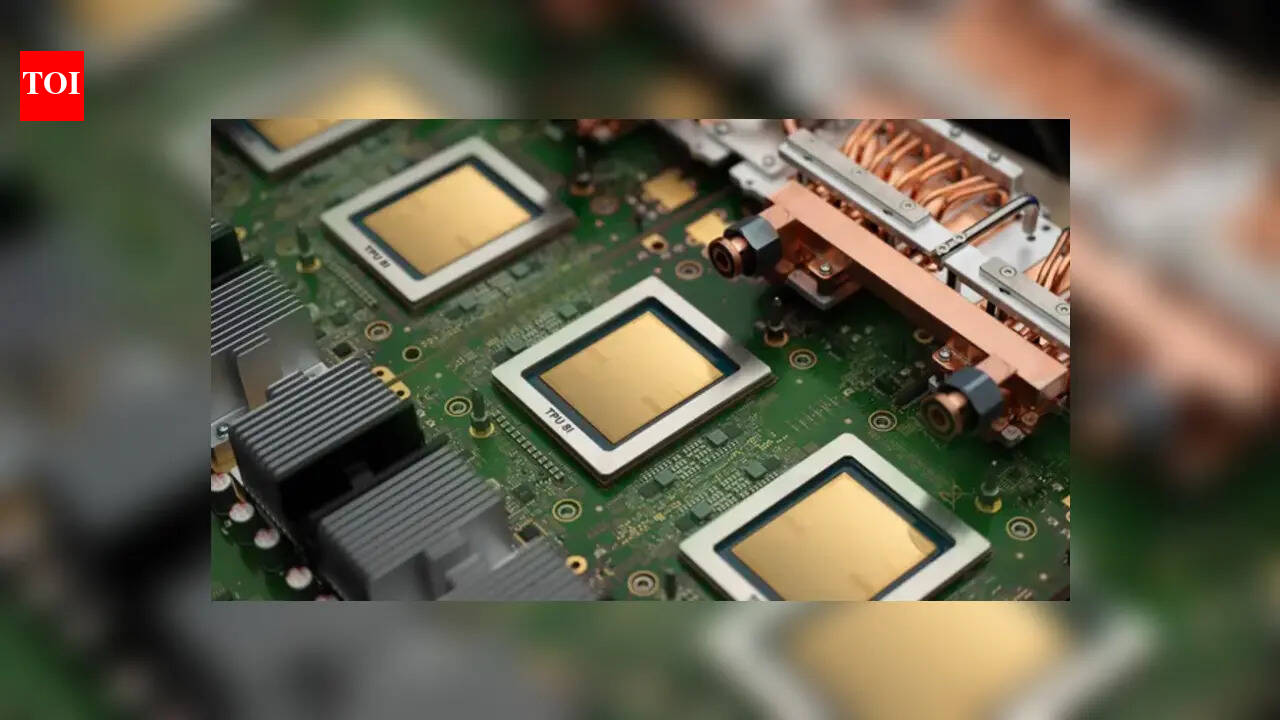

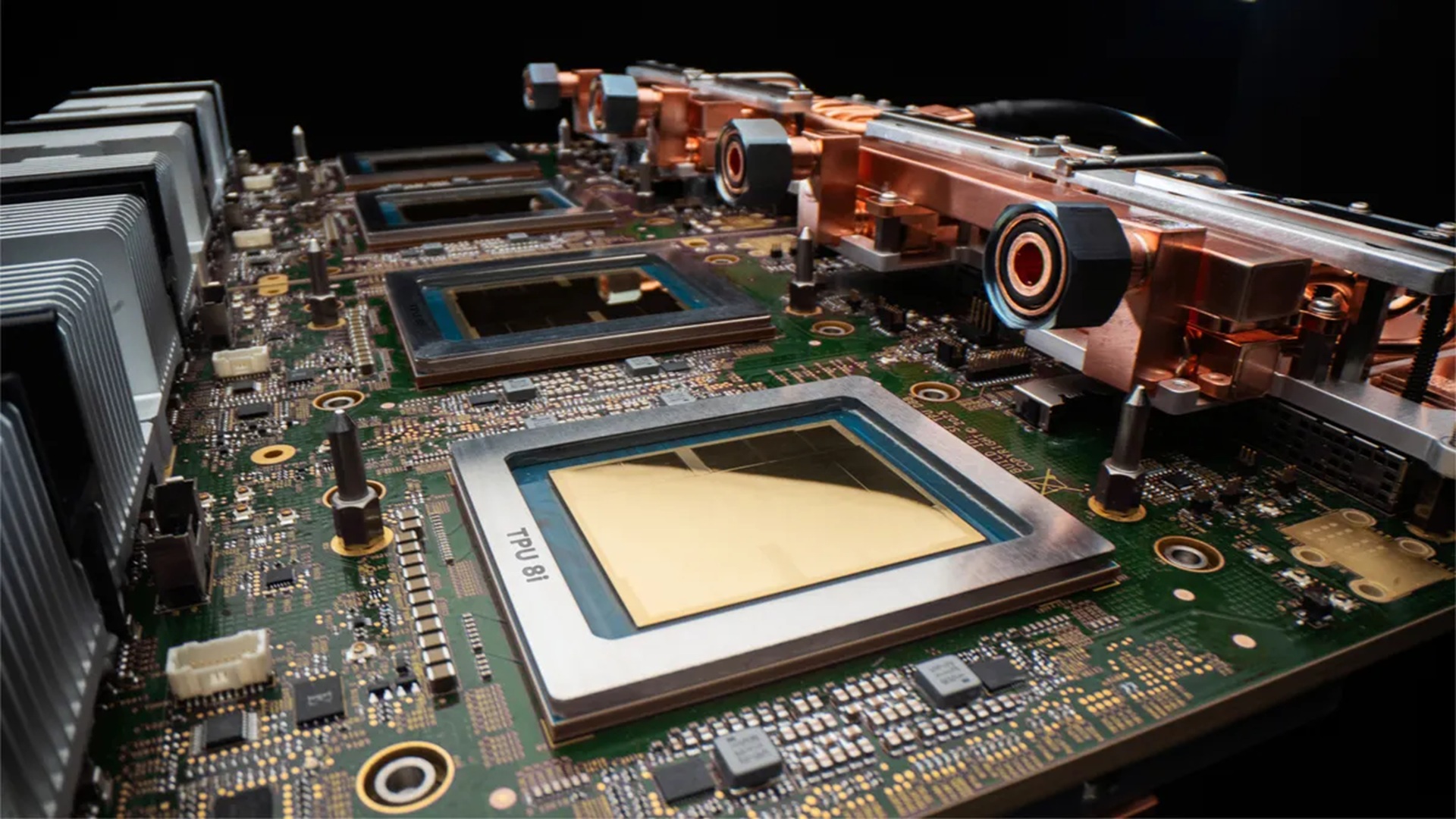

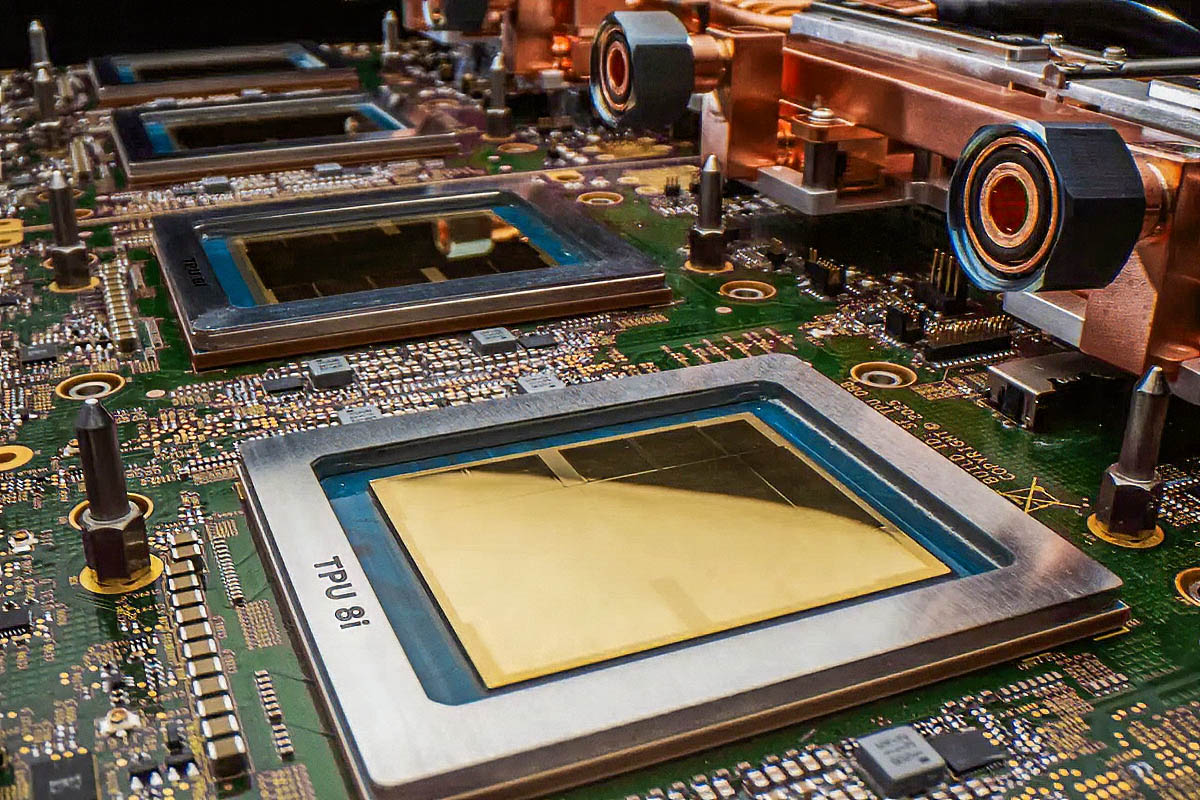

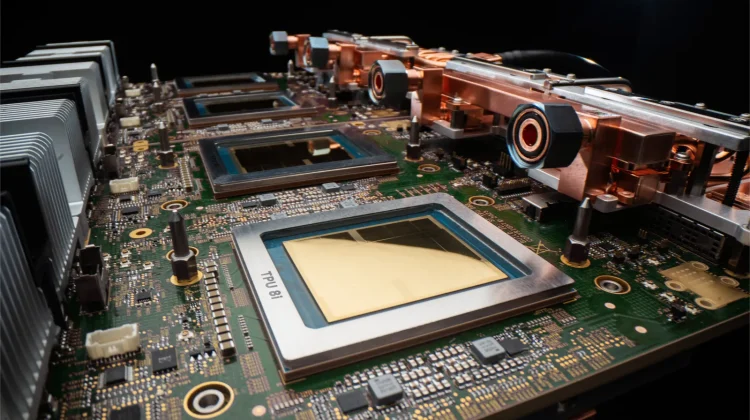

Google's announcement of its eighth-generation Tensor Processing Units (TPU 8t and TPU 8i) at Google Cloud Next represents a watershed moment for e-commerce sellers leveraging AI-powered operations. The TPU 8i delivers 80% better performance-per-dollar compared to previous generations, enabling businesses to serve nearly twice the customer volume at equivalent cost. That is a direct cost-reduction opportunity for sellers running AI agents for product recommendations, pricing optimization, and customer service automation. The TPU 8t training chip achieves 121 ExaFlops of compute with 97% goodput (the share of compute time spent on useful, productive work), meaning sellers can train custom AI models for demand forecasting, inventory optimization, and personalized product discovery 3x faster than before.

For e-commerce sellers, this infrastructure breakthrough translates into immediate automation wins. Sellers currently using Google Cloud AI services (Vertex AI, BigQuery ML) stand to see substantial cost reductions in real-time operations. The TPU 8i's 288 GB of high-bandwidth memory and the 5x latency reduction from the Collectives Acceleration Engine mean product recommendation engines can process customer behavior data up to 5x faster, enabling real-time personalization at scale. A mid-sized seller running 10,000 daily product recommendations could reduce inference costs from $500-800/month to $100-160/month, an 80% saving that directly improves margins. Note that this figure goes beyond the headline number: an 80% performance-per-dollar gain on its own implies roughly 44% lower cost for a fixed workload, so the larger saving assumes additional gains such as lower latency and higher utilization. The 97% goodput metric is critical: it means fewer wasted compute cycles, translating to predictable, lower cloud bills for sellers automating inventory management, dynamic pricing, and customer segmentation.
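The cost arithmetic above can be checked with a few lines of Python. This is a minimal sketch, assuming that "80% better performance-per-dollar" means 1.8x the work per dollar and that the workload stays fixed. The function names are hypothetical and are not part of any Google Cloud API.

```python
def cost_after_gain(current_cost: float, perf_per_dollar_gain: float) -> float:
    """Cost of the same workload after a fractional perf-per-dollar gain.

    A gain of 0.80 means 80% more work per dollar, i.e. 1.8x (assumed reading).
    """
    if perf_per_dollar_gain <= -1.0:
        raise ValueError("gain must be greater than -1.0")
    return current_cost / (1.0 + perf_per_dollar_gain)


def savings_fraction(before: float, after: float) -> float:
    """Fraction of the original cost that is saved."""
    return (before - after) / before


def implied_gain(before: float, after: float) -> float:
    """Perf-per-dollar gain needed to move a fixed workload from `before` to `after`."""
    return before / after - 1.0


if __name__ == "__main__":
    # Headline figure applied to the low end of the article's example ($500/month).
    new_cost = cost_after_gain(500.0, 0.80)
    print(f"Cost at 1.8x perf-per-dollar: ${new_cost:.2f}")
    print(f"Savings from headline gain:   {savings_fraction(500.0, new_cost):.1%}")

    # The article's example range: $500 -> $100 and $800 -> $160.
    print(f"Savings in the example:       {savings_fraction(500.0, 100.0):.0%}")
    print(f"Gain the example implies:     {implied_gain(500.0, 100.0):.0%}")
```

Under these assumptions, the headline gain alone lowers a fixed $500 bill to about $278, while the $500-to-$100 example corresponds to a 5x improvement in work per dollar. Sellers estimating their own savings may want to run both numbers rather than rely on a single percentage.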

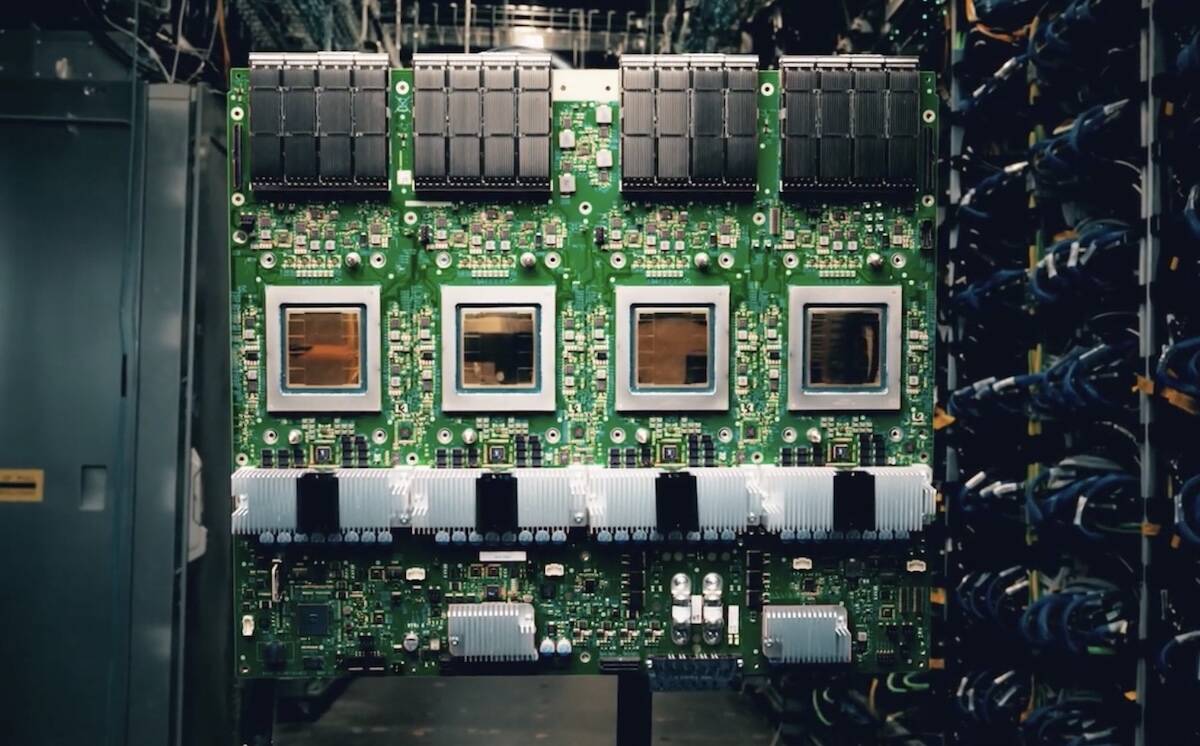

The competitive advantage window is an estimated 6-12 months. Google's announcement specifies availability in "late 2025," creating a first-mover opportunity for sellers who migrate AI workloads to Google Cloud before competitors do. Within that window, early adopters could gain 3-6 months of cost advantage before AWS and Azure match pricing. Sellers in high-velocity categories (electronics, fashion, home goods) running AI-powered dynamic pricing engines stand to see the biggest ROI, potentially a 15-25% margin improvement from faster, cheaper price optimization. The architecture's support for "near-linear scaling to one million chips in a single logical cluster" means sellers can scale AI operations without re-architecting systems, potentially reducing technical debt and engineering costs by 30-40%.

Immediate action items for sellers: Audit current Google Cloud spend on AI/ML workloads (Vertex AI, BigQuery, Dataflow). Sellers spending $5,000+/month on inference should request TPU 8i migration timelines from their Google Cloud account teams. For sellers not yet using AI, this cost reduction makes AI-powered product recommendations and dynamic pricing economically viable for the first time, with ROI breakeven dropping from 12-18 months to 4-6 months. The 2025 availability window means decisions must be made in Q1 2025 to capture H2 2025 cost benefits.
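To see how lower inference costs shorten ROI breakeven, a seller can plug its own numbers into a simple payback formula: upfront cost divided by net monthly benefit. The sketch below is illustrative only. The function name and every dollar figure are hypothetical placeholders, not values from Google's announcement, and the model ignores discounting and ramp-up time.

```python
import math


def breakeven_months(upfront_cost: float,
                     monthly_benefit: float,
                     monthly_running_cost: float) -> float:
    """Months until cumulative net benefit covers the upfront cost.

    Returns math.inf when running costs meet or exceed the monthly benefit,
    because the project never pays back under this simple model.
    """
    net_monthly = monthly_benefit - monthly_running_cost
    if net_monthly <= 0:
        return math.inf
    return upfront_cost / net_monthly


if __name__ == "__main__":
    # Hypothetical seller: $12,000 integration cost, $1,500/month margin uplift.
    upfront, uplift = 12_000.0, 1_500.0

    before = breakeven_months(upfront, uplift, monthly_running_cost=700.0)
    after = breakeven_months(upfront, uplift, monthly_running_cost=140.0)  # 80% lower

    print(f"Breakeven at current inference cost: {before:.1f} months")
    print(f"Breakeven at reduced inference cost: {after:.1f} months")
```

How far breakeven actually moves depends on how large inference cost is relative to the monthly benefit and the upfront investment, so the result for a given seller may differ from the ranges quoted above.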