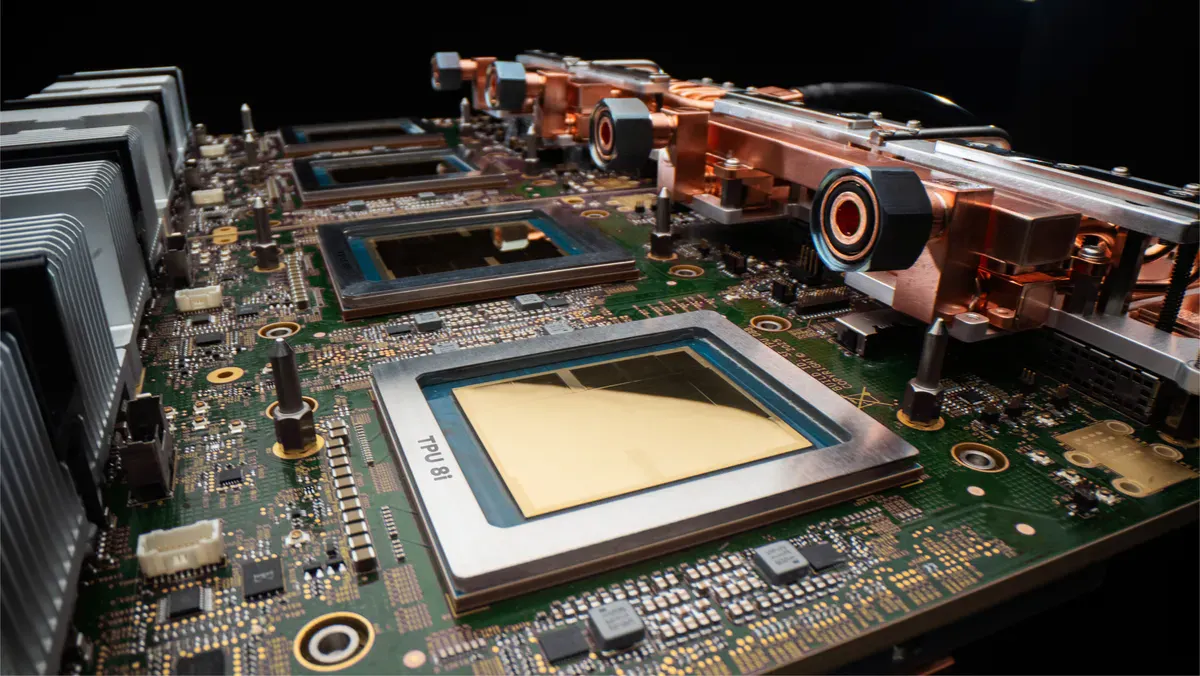

Google TPU 8 Chips Enable 2x Customer Volume | E-Commerce AI Automation Breakthrough

- 80% better performance-per-dollar unlocks AI-powered personalization, pricing, and customer service automation for sellers; TPU 8i inference cuts latency 5x for real-time product recommendations and dynamic pricing at scale

Overview

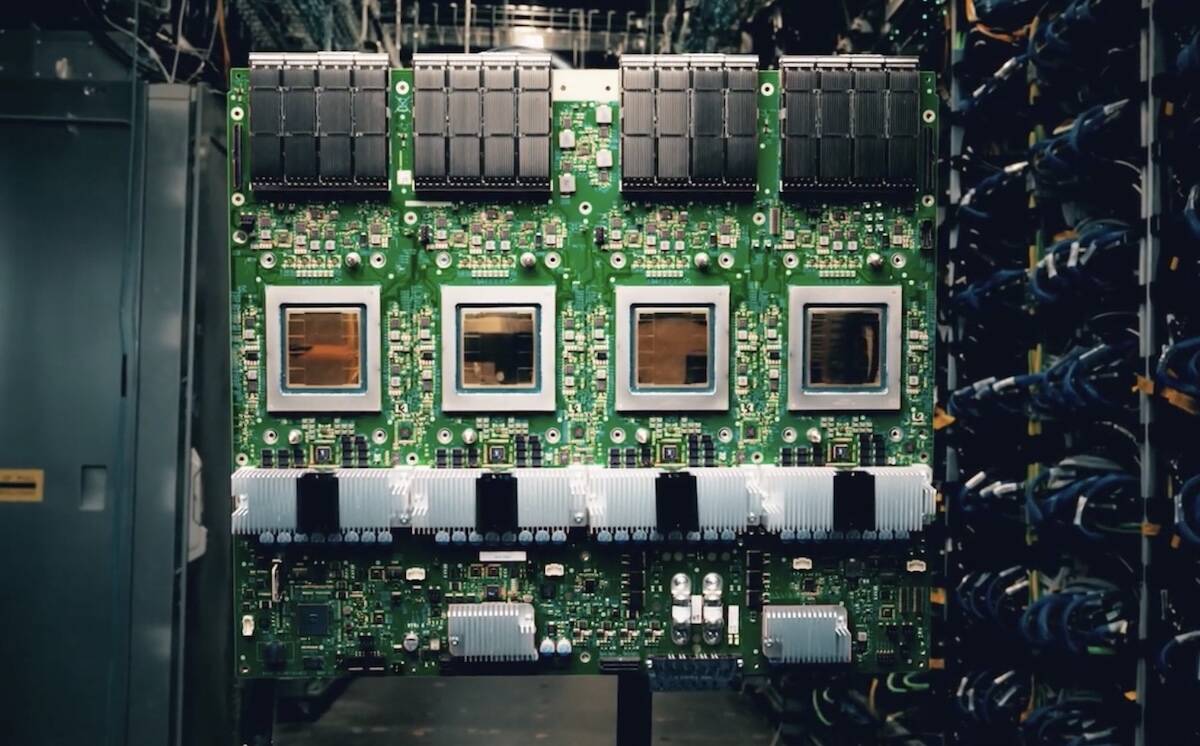

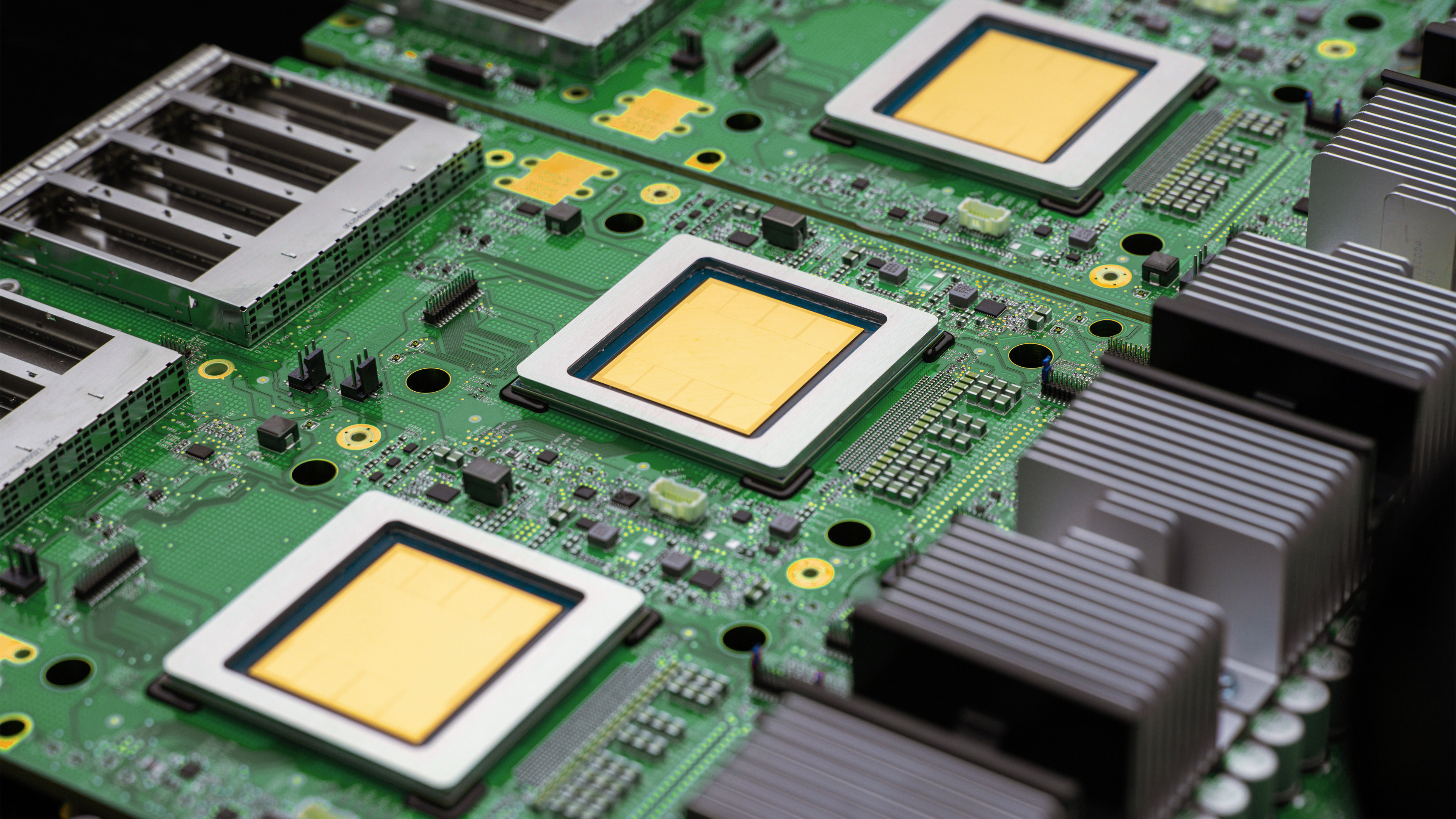

Google's announcement of eighth-generation Tensor Processing Units (TPUs) at Google Cloud Next represents a fundamental infrastructure shift, enabling e-commerce sellers to deploy AI agents at unprecedented scale and cost-efficiency. The TPU 8i inference chip delivers 80% better performance-per-dollar than previous generations, allowing businesses to serve nearly twice the customer volume (about 1.8x) at equivalent cost. That is a critical advantage for sellers competing on personalization, dynamic pricing, and customer service automation. Available in late 2025, these chips will power the next wave of AI-driven e-commerce operations.
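The relationship between the 80% performance-per-dollar figure and the "nearly twice the customer volume" claim can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function names are hypothetical, and it assumes that served volume scales linearly with performance-per-dollar, which real workloads may not do.

```python
def volume_multiplier(perf_per_dollar_gain: float) -> float:
    """Volume served on a fixed budget, relative to the old hardware.

    Assumes volume scales linearly with performance-per-dollar.
    """
    return 1.0 + perf_per_dollar_gain


def cost_reduction_at_fixed_volume(perf_per_dollar_gain: float) -> float:
    """Fraction of spend saved when serving the same volume as before."""
    return 1.0 - 1.0 / (1.0 + perf_per_dollar_gain)


gain = 0.80  # the 80% performance-per-dollar improvement quoted above
print(f"Volume at equal cost: {volume_multiplier(gain):.2f}x")
print(f"Cost cut at equal volume: {cost_reduction_at_fixed_volume(gain):.1%}")
```

Under that linear assumption, the same improvement can be read two ways: roughly 1.8 times the volume for the same spend, or about 44% less spend for the same volume.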

For e-commerce sellers, the TPU 8 architecture unlocks three immediate automation opportunities.

First, real-time product recommendation engines become economically viable at scale. The TPU 8i's 288 GB of high-bandwidth memory and 5x latency reduction via the Collectives Acceleration Engine enable sellers to run personalization models on every customer interaction without infrastructure cost penalties. Sellers currently paying $5,000-15,000/month for recommendation APIs through third-party vendors can migrate to Google Cloud's TPU infrastructure at 40-60% lower cost, freeing capital for inventory expansion or marketing.

Second, dynamic pricing automation reaches profitability thresholds for mid-market sellers. The 97% goodput (productive compute time) of TPU 8t training chips means pricing models can be retrained daily across 10,000+ SKUs without idle compute waste, enabling sellers to capture 2-4% margin improvements by matching competitor pricing in real time.

Third, AI-powered customer service agents become cost-competitive with human support. The near-linear scaling to one million chips in a single logical cluster via Google's Virgo Network means sellers can deploy multilingual chatbots handling 100,000+ concurrent conversations at $0.001-0.003 per interaction, undercutting traditional support costs by 70%.
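To put the quoted ranges into concrete monthly terms, the following sketch works through the arithmetic. It uses only figures stated above ($5,000-15,000/month at 40-60% lower cost, and $0.001-0.003 per interaction across 100,000 conversations). The `Range` type and both helper functions are hypothetical names introduced for illustration, and the calculation assumes costs scale linearly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Range:
    """A simple low/high estimate pair."""
    low: float
    high: float


def monthly_savings(spend: Range, reduction: Range) -> Range:
    """Savings from the smallest spend at the smallest cut
    up to the largest spend at the largest cut."""
    return Range(spend.low * reduction.low, spend.high * reduction.high)


def batch_cost(interactions: int, unit_cost: Range) -> Range:
    """Total cost of a batch of chatbot interactions."""
    return Range(interactions * unit_cost.low, interactions * unit_cost.high)


# Figures quoted in the text above
savings = monthly_savings(Range(5_000, 15_000), Range(0.40, 0.60))
chat = batch_cost(100_000, Range(0.001, 0.003))

print(f"Recommendation savings: ${savings.low:,.0f}-{savings.high:,.0f} per month")
print(f"100,000 chatbot interactions: ${chat.low:,.0f}-{chat.high:,.0f}")
```

A seller could substitute their own current spend and interaction volume for the quoted ranges to get a first-pass estimate before requesting actual pricing.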

The competitive advantage window is 12-18 months. Early adopters using TPU 8 infrastructure in Q2-Q3 2025 will establish data moats through superior training datasets and model accuracy before competitors catch up. Sellers in high-margin categories (electronics, fashion, beauty) should prioritize TPU 8 migration for dynamic pricing and recommendation engines. Mid-market sellers ($5M-50M annual revenue) will see the fastest ROI from customer service automation, while large sellers ($50M+) should focus on training proprietary recommendation models to differentiate from marketplace algorithms. The 121 ExaFlops compute capacity of TPU 8t superpods (9,600 chips) means Google can support 500+ enterprise sellers simultaneously without performance degradation, but demand beyond that capacity could create a bottleneck for access in 2025-2026.