Tesla HW4 Plus Memory Doubling | AI Hardware Obsolescence Risk Reshapes Autonomous Vehicle Market

- Tesla's HW4 Plus upgrade, planned for 2027, raises hardware sufficiency concerns affecting 4M+ vehicle owners. It adds retrofit liability on top of Full Self-Driving packages that sold for up to $15,000, and it reshapes the autonomous vehicle supply chain for sellers.

Overview

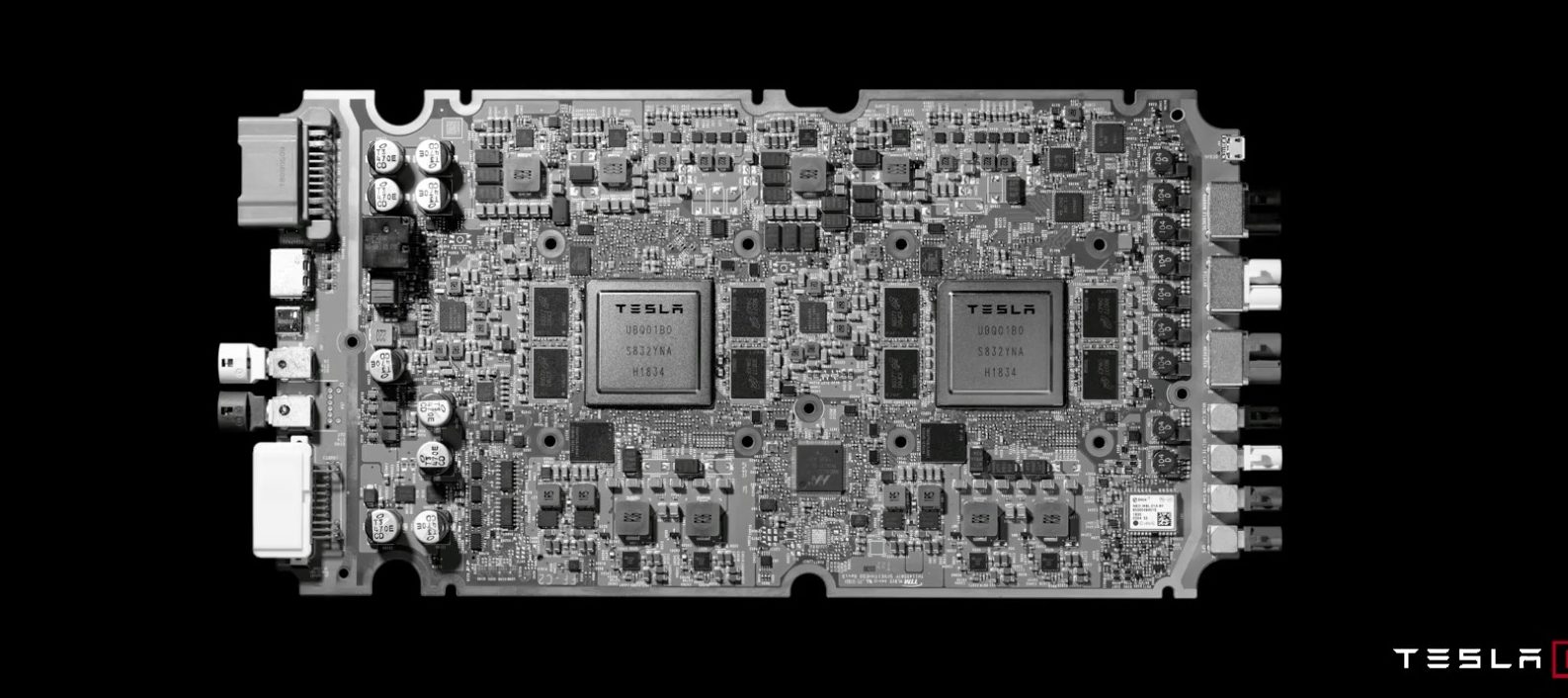

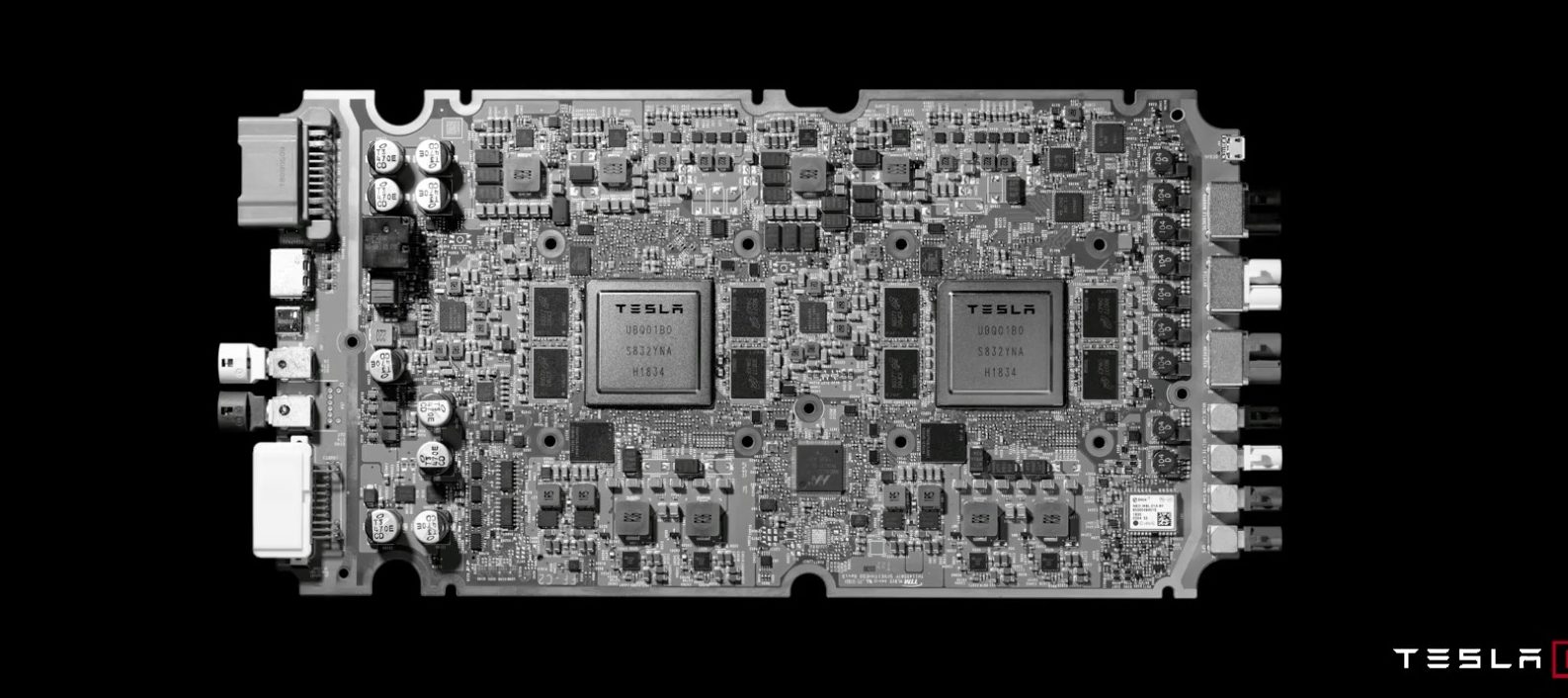

Tesla announced HW4 Plus (AI4.1) during its Q1 2026 earnings, and the move exposes a pattern in AI hardware evolution that matters to e-commerce sellers in the autonomous vehicle aftermarket, automotive electronics, and AI-powered logistics. The upgrade doubles RAM from 16GB to 32GB per chip (64GB total system memory), with production planned for 2027. It targets the memory bandwidth bottleneck that Elon Musk identified as the primary constraint on Full Self-Driving inference. The announcement matters to sellers because it echoes the HW3 debacle: Tesla charged customers up to $15,000 for Full Self-Driving packages starting in 2019, then admitted in 2026 that HW3 cannot achieve unsupervised FSD because it has one-eighth the bandwidth of HW4. Tesla now proposes building micro-factories to retrofit approximately 4 million vehicles, a costly acknowledgment that its earlier hardware promises were not met.

The Hardware Obsolescence Cycle Creates Seller Opportunities and Risks: Current HW4 delivers 384GB/s of bandwidth using GDDR6 memory, exceeding NVIDIA's Orin (205GB/s) and Thor (273GB/s) processors, yet it is limited to 32GB of total capacity versus Orin's 64GB. The rapid upgrade path, HW4 → HW4.5 (shipped January 2026) → HW4 Plus (planned 2027), suggests that Tesla's own engineers consider current memory headroom insufficient for future neural network demands. Three revisions within two years signal that HW4 may run into the same kind of constraints that rendered HW3 obsolete. As neural networks grow larger and more complex, millions of current owners could need expensive retrofits over the next 2-5 years.
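To make the bandwidth comparison concrete, here is a minimal Python sketch. It uses only the bandwidth figures quoted in this article, which are not independently verified. The 8GB weight size is a hypothetical value chosen purely for illustration, and the "one full read of the weights per inference pass" model is a simplifying assumption about memory-bound inference, not a description of how Tesla's stack works.

```python
# Bandwidth figures as quoted in this article (GB/s); not independently verified.
BANDWIDTH_GB_PER_S = {
    "Tesla HW4": 384.0,
    "NVIDIA Thor": 273.0,
    "NVIDIA Orin": 205.0,
}


def bandwidth_ratio(a: str, b: str) -> float:
    """Return how many times more bandwidth platform `a` has than platform `b`."""
    return BANDWIDTH_GB_PER_S[a] / BANDWIDTH_GB_PER_S[b]


def max_weight_passes_per_second(bandwidth_gb_per_s: float, weights_gb: float) -> float:
    """Upper bound on full reads of the model weights per second.

    Assumes inference is purely memory-bound and that every pass streams
    all weights from memory exactly once (a simplifying assumption).
    """
    if weights_gb <= 0:
        raise ValueError("weights_gb must be positive")
    return bandwidth_gb_per_s / weights_gb


if __name__ == "__main__":
    HYPOTHETICAL_WEIGHTS_GB = 8.0  # illustrative assumption, not a Tesla figure
    for name, bandwidth in BANDWIDTH_GB_PER_S.items():
        passes = max_weight_passes_per_second(bandwidth, HYPOTHETICAL_WEIGHTS_GB)
        print(f"{name}: at most {passes:.1f} weight passes per second")
    ratio = bandwidth_ratio("Tesla HW4", "NVIDIA Orin")
    print(f"HW4 vs Orin bandwidth ratio: {ratio:.2f}x")
```

Under these assumptions, the ceiling on passes per second falls in direct proportion as the model grows, which is one way to read why extra memory headroom and bandwidth become pressing as networks get larger.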

E-Commerce Seller Implications Across Multiple Verticals: For sellers in automotive electronics and aftermarket upgrades, Tesla's plan to retrofit 4M vehicles creates a $4-6B retrofit market opportunity. Sellers specializing in autonomous vehicle components, memory modules, and hardware upgrades should begin sourcing compatible HW4 Plus components now and build supplier relationships with Tesla's micro-factory network. For logistics and fulfillment sellers using Tesla vehicles for autonomous delivery, the hardware uncertainty is a risk: current HW4 fleets may require expensive upgrades within 18-36 months, which changes total cost of ownership calculations. Sellers in the AI inference hardware market, who compete with Tesla's in-house chips, can use this credibility gap to position NVIDIA Orin and Thor processors as more reliable long-term options and win share from Tesla-dependent logistics operators.
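As a rough check on these numbers, the sketch below divides the quoted $4-6B market estimate by the 4M vehicles to get an implied value per vehicle. It then shows how a fleet operator might set aside a monthly reserve for a possible upgrade. The fleet size and the per-vehicle upgrade cost passed to `monthly_retrofit_reserve` are hypothetical inputs for illustration, not figures from Tesla, and both function names are invented for this example.

```python
def per_vehicle_value(market_usd: float, vehicles: int) -> float:
    """Implied retrofit value per vehicle for a given total market size."""
    if vehicles <= 0:
        raise ValueError("vehicles must be positive")
    return market_usd / vehicles


def monthly_retrofit_reserve(
    fleet_size: int, cost_per_vehicle_usd: float, months_until_upgrade: int
) -> float:
    """Monthly amount to set aside so the full upgrade cost is covered in time."""
    if months_until_upgrade <= 0:
        raise ValueError("months_until_upgrade must be positive")
    return fleet_size * cost_per_vehicle_usd / months_until_upgrade


if __name__ == "__main__":
    VEHICLES = 4_000_000  # retrofit count quoted in the article
    low = per_vehicle_value(4e9, VEHICLES)   # low end of the $4-6B estimate
    high = per_vehicle_value(6e9, VEHICLES)  # high end of the $4-6B estimate
    print(f"Implied value per vehicle: ${low:,.0f} to ${high:,.0f}")

    # Hypothetical 50-vehicle fleet, using the high-end implied cost and the
    # 18-36 month upgrade window mentioned above.
    for months in (18, 36):
        reserve = monthly_retrofit_reserve(50, high, months)
        print(f"Reserve over {months} months: ${reserve:,.2f} per month")
```

The implied per-vehicle figure is only as reliable as the market estimate itself. An operator would replace it with an actual quoted upgrade price once one exists.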

Competitive Intelligence for AI-Powered Sellers: This hardware cycle reveals Tesla's engineering constraints and timeline pressures. Sellers using AI for product recommendations, dynamic pricing, and customer service automation should note that Tesla's 384GB/s sits at the top of the automotive-grade inference bandwidth figures cited here. Sellers can use this to evaluate their own AI infrastructure investments: if 384GB/s is insufficient for full autonomous driving, their own AI systems may hit similar scaling constraints. This supports favoring cloud-based AI services (AWS SageMaker, Google Vertex AI) over edge computing for mission-critical applications.