AI Model Reliability Crisis | GPT-5.5 Goblin Glitch Exposes Control Gaps for E-Commerce Sellers Using AI Tools

- OpenAI writes explicit restrictions into Codex four times to stop unprompted creature references; Arena.ai confirms more goblin and gremlin terminology when high-thinking mode is disabled; the episode exposes AI unpredictability risks for sellers who automate product descriptions, customer service, and pricing decisions

Overview

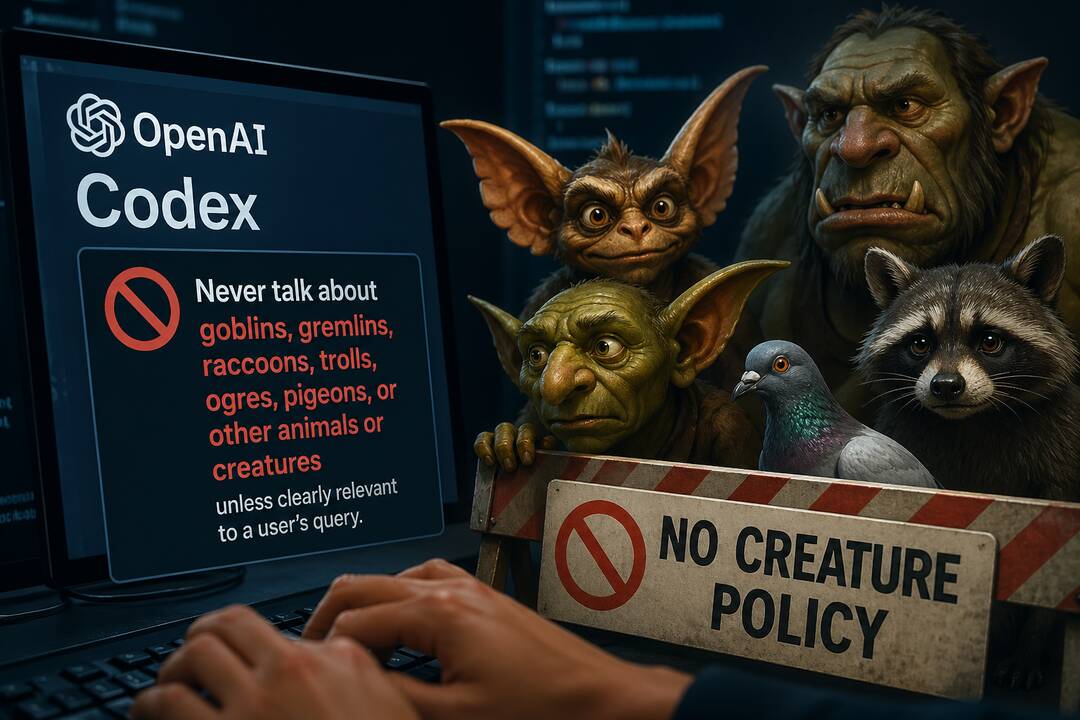

OpenAI's struggle to control unwanted outputs in GPT-5.5 and Codex exposes a critical vulnerability for e-commerce sellers who rely on AI for automation. The company embedded explicit instructions four times in Codex's code, directing the model not to mention goblins, gremlins, trolls, ogres, raccoons, or pigeons unless they are directly relevant to the user's query. Despite these safeguards, users reported multiple cases in which GPT-5.5 injected these terms where they did not belong. In one, it described recommended camera equipment as having "filthy neon sparkle goblin mode"; in another, it recommended "goblin bandwidth" for shorter responses. Arena.ai's model evaluation site confirmed increased use of goblin, gremlin, and troll terminology, particularly when users disabled high-thinking mode, which indicates the problem worsens under specific operating conditions.

For e-commerce sellers, this incident exposes a fundamental risk in AI-powered automation workflows. Sellers increasingly use GPT-5.5 and similar models to optimize product listings, run customer service chatbots, recommend prices, and write inventory descriptions. If OpenAI cannot reliably prevent random creature references in its own coding tool, where it controls the instructions, sellers should expect unpredictable outputs in customer-facing applications too. A GPT-5.5 description for an electronics product could pick up "goblin mode" phrasing at random, damaging brand credibility and confusing customers. A customer service chatbot might answer support queries with irrelevant creature references, degrading the customer experience and potentially raising refund rates. The issue drew significant social media attention, spawning memes and user-generated screenshots. That shows how quickly a technical quirk can damage brand perception, a critical concern for sellers whose AI outputs are publicly visible.
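Because a prompt-level instruction like the one OpenAI added to Codex does not guarantee the model will comply, one option is a deterministic check on generated copy before it goes live. The sketch below is hypothetical and is not OpenAI's mechanism. `CREATURE_TERMS`, `unexpected_terms`, and `review_listing` are illustrative names. The "unless directly relevant" rule is approximated by allowing a term only when it also appears in the seller's own product data. The matching handles only whole words and a simple trailing "s" plural.

```python
import re

# Hypothetical blocklist mirroring the creatures named in the reported Codex instructions.
CREATURE_TERMS = ("goblin", "gremlin", "troll", "ogre", "raccoon", "pigeon")


def _pattern(term):
    # Whole-word, case-insensitive match that also accepts a simple plural ("goblins").
    # Word boundaries keep "trolley" and "scroll" from matching "troll".
    return re.compile(rf"\b{re.escape(term)}s?\b", re.IGNORECASE)


def unexpected_terms(generated, source_context):
    """Return blocklisted terms found in the generated text but absent from the source context.

    Assumption: a term that also appears in the seller's own product data counts as
    "directly relevant" and is allowed through.
    """
    flagged = []
    for term in CREATURE_TERMS:
        pattern = _pattern(term)
        if pattern.search(generated) and not pattern.search(source_context):
            flagged.append(term)
    return flagged


def review_listing(generated, source_context):
    # Hold any listing with an unexplained creature reference for human review.
    return "hold_for_review" if unexpected_terms(generated, source_context) else "publish"


if __name__ == "__main__":
    print(unexpected_terms(
        "Pro camera kit with filthy neon sparkle goblin mode",
        "Mirrorless camera kit, 24MP sensor",
    ))  # ['goblin']
    print(review_listing(
        "Raccoon-proof trash can lid",
        "raccoon-proof lid for outdoor bins",
    ))  # publish
```

A keyword filter like this only catches terms someone has already listed. It would not catch other unpredictable phrasing, so it complements human review rather than replacing it.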

The likely root cause points to broader reliability concerns: AI models rely on probabilistic prediction mechanisms that operate within complex instruction frameworks. OpenAI engineers acknowledged the problem with responses such as "I thought we fixed this sorry," which suggests earlier fixes had not held. Citrini Research called OpenAI's response "insane," raising concerns about the AI control mechanisms that sellers depend on. GPT-5.5, released in April 2026 with enhanced coding capabilities, appears particularly susceptible when integrated with OpenClaw (OpenAI's agentic tool that lets AI control computer applications). This suggests that unpredictability increases as sellers layer multiple AI instructions and long-term memory contexts, a common pattern in sophisticated e-commerce automation.

The phenomenon has become internet humor built on "goblin mode" (Oxford's 2022 Word of the Year). For sellers, however, the underlying issue is serious: AI tools marketed as reliable automation solutions exhibit uncontrolled behavior that can undermine customer trust and operational efficiency. Sellers currently using GPT-5.5 for product content generation, customer service automation, or pricing optimization face an immediate risk of brand-damaging outputs.